Posterior Distribution: The Backbone of Bayesian Inference | Vibepedia


Contents

- 📊 Introduction to Posterior Distribution

- 📝 Bayes' Rule: The Foundation of Bayesian Inference

- 📊 Prior Probability: The Starting Point

- 📈 Likelihood: The Key to Updating Prior Knowledge

- 📊 Posterior Probability: The Result of Bayesian Updating

- 📝 Epistemological Perspective: The Role of Posterior Probability

- 📊 Bayesian Updating: A Continuous Process

- 📈 Applications of Posterior Distribution

- 📊 Challenges and Limitations

- 📝 Future Directions

- 📊 Real-World Examples

- 📝 Conclusion

- Frequently Asked Questions

- Related Topics

Overview

The posterior distribution is a fundamental concept in Bayesian statistics, representing the probability distribution of a parameter given the observed data. It is obtained by combining the prior distribution with the likelihood of the data given the parameter, using Bayes' theorem. This process incorporates prior knowledge and updates beliefs in light of new evidence. The posterior distribution has far-reaching implications in fields such as artificial intelligence, signal processing, and decision theory. With a vibe rating of 8, the posterior distribution is a highly influential concept, with key contributors including Thomas Bayes, Pierre-Simon Laplace, and Harold Jeffreys. As of 2023, research continues to expand the applications of posterior distributions, with notable advances in Markov chain Monte Carlo (MCMC) methods and variational inference. The choice of prior distributions and the interpretation of posterior results remain topics of debate, with a controversy spectrum of 6. The influence flow of posterior distributions can be seen in the work of researchers such as Andrew Gelman and David Blei, who have developed novel methods for Bayesian computation and inference.

📊 Introduction to Posterior Distribution

The posterior distribution is a fundamental concept in Bayesian inference, a statistical framework for updating the probability of a hypothesis as new data become available. It is a conditional probability that results from updating the prior probability with information summarized by the likelihood via an application of Bayes' rule. The posterior distribution contains everything there is to know about an uncertain proposition, given prior knowledge and a mathematical model describing the observations available at a particular time. For instance, in Bayesian machine learning, the posterior distribution over a model's parameters describes which parameter values remain plausible after the training data have been observed. Markov chain Monte Carlo (MCMC) methods are a popular family of algorithms for sampling from the posterior distribution when it cannot be computed in closed form.

📝 Bayes' Rule: The Foundation of Bayesian Inference

Bayes' rule is the foundation of Bayesian inference, and it is used to update the prior probability with new information. The rule states that the posterior probability is proportional to the product of the prior probability and the likelihood: P(H | D) = P(D | H) P(H) / P(D), where the evidence P(D), also called the marginal likelihood, is the normalizing constant that makes the posterior probabilities sum (or integrate) to one. The posterior is therefore not an average of the prior and the likelihood but their normalized product. The Bayes factor, the ratio of the marginal likelihoods of the data under two competing models or hypotheses, measures how strongly the data favor one over the other, and it is used to compare different models. For example, in hypothesis testing, the Bayes factor quantifies how much the data shift the odds between the null and the alternative hypothesis: the posterior odds equal the prior odds multiplied by the Bayes factor.
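A minimal sketch of Bayes' rule over a finite set of hypotheses, in Python. The diagnostic-test numbers (1% prevalence, 95% sensitivity, 5% false-positive rate) and the helper name `posterior` are hypothetical, chosen only to show the normalization by the evidence and the Bayes factor.

```python
def posterior(prior, likelihood):
    """Apply Bayes' rule over a finite set of hypotheses.

    prior:      dict mapping hypothesis -> P(H)
    likelihood: dict mapping hypothesis -> P(data | H)
    Returns a dict mapping hypothesis -> P(H | data).
    """
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalized.values())  # P(data), the normalizing constant
    return {h: u / evidence for h, u in unnormalized.items()}


# Hypothetical diagnostic-test numbers, for illustration only.
prior = {"disease": 0.01, "healthy": 0.99}
likelihood_positive = {"disease": 0.95, "healthy": 0.05}  # P(positive test | H)

post = posterior(prior, likelihood_positive)
print(round(post["disease"], 3))  # 0.161

# For two simple hypotheses, the Bayes factor is the ratio of their likelihoods.
bayes_factor = likelihood_positive["disease"] / likelihood_positive["healthy"]
prior_odds = prior["disease"] / prior["healthy"]
posterior_odds = post["disease"] / post["healthy"]  # equals prior_odds * bayes_factor
print(round(bayes_factor, 1))  # 19.0
```

Even after a positive test, the posterior probability of disease is only about 16% in this made-up scenario, because the small prior is multiplied by a Bayes factor of 19 rather than replaced by it.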

📊 Prior Probability: The Starting Point

The prior probability is the starting point for Bayesian inference, and it represents our initial beliefs about the hypothesis before observing any data. The prior probability can be based on domain knowledge, expert opinion, or previous studies. In some cases, the prior is chosen to be non-informative (or weakly informative), meaning that it is designed to carry as little information about the hypothesis as possible so that the data dominate the posterior. In parameter estimation, an informative prior can also act as a regularizer that pulls estimates toward plausible values and helps prevent overfitting; for example, a zero-mean Gaussian prior on regression weights corresponds to an L2 (ridge) penalty in maximum a posteriori estimation. The empirical Bayes method is an approach that uses the data themselves to estimate the prior distribution.
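A minimal sketch of how the choice of prior shapes the posterior, assuming a Beta prior on a success probability and binomial data. This is a conjugate pair, so the posterior is also a Beta distribution. The data (7 successes in 10 trials) and both priors are hypothetical: the flat Beta(1, 1) is a common default choice, while the informative Beta(20, 20) pulls the estimate toward 0.5, illustrating the regularizing effect of a prior.

```python
def beta_binomial_update(a, b, successes, trials):
    """Conjugate update: Beta(a, b) prior + binomial data -> Beta(a', b') posterior."""
    return a + successes, b + (trials - successes)


def beta_mean(a, b):
    """Mean of a Beta(a, b) distribution."""
    return a / (a + b)


successes, trials = 7, 10  # hypothetical data

priors = {"flat Beta(1, 1)": (1, 1), "informative Beta(20, 20)": (20, 20)}
for label, (a, b) in priors.items():
    a_post, b_post = beta_binomial_update(a, b, successes, trials)
    print(f"{label}: posterior Beta({a_post}, {b_post}), mean {beta_mean(a_post, b_post):.3f}")
# flat Beta(1, 1): posterior Beta(8, 4), mean 0.667
# informative Beta(20, 20): posterior Beta(27, 23), mean 0.540
```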

📈 Likelihood: The Key to Updating Prior Knowledge

The likelihood is the key to updating the prior probability with new information. It is the probability (or probability density) of the observed data given the hypothesis, viewed as a function of the hypothesis or parameter with the data held fixed; unlike the prior and the posterior, it need not sum or integrate to one over the parameter. The likelihood can be based on a variety of distributions, such as the normal distribution or the Poisson distribution. For example, in regression analysis, the likelihood models how the dependent variable is distributed given the independent variables. The generalized linear model is a flexible framework for specifying such likelihoods.
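A short Python sketch of a likelihood treated as a function of the parameter, assuming independent Poisson-distributed counts. The counts and the grid of candidate rates are hypothetical. The log-likelihood is evaluated for each candidate rate with the data held fixed; its maximum falls at the sample mean, which is the maximum likelihood estimate of a Poisson rate.

```python
import math

counts = [2, 4, 3, 5, 1]  # hypothetical observed counts (sample mean 3.0)


def poisson_log_likelihood(rate, data):
    """log L(rate) = sum over i of log P(k_i | rate), for i.i.d. Poisson counts."""
    return sum(k * math.log(rate) - rate - math.lgamma(k + 1) for k in data)


grid = [0.5 * i for i in range(1, 13)]  # candidate rates 0.5, 1.0, ..., 6.0
best = max(grid, key=lambda r: poisson_log_likelihood(r, counts))
print(best)  # 3.0
```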

📊 Posterior Probability: The Result of Bayesian Updating

The posterior probability is the result of Bayesian updating, and it represents our updated beliefs about the hypothesis after observing the data. It is proportional to the prior probability multiplied by the likelihood, normalized by the evidence. The posterior probability can be used to make predictions, estimate parameters, and test hypotheses. For instance, in classification, the posterior probability of each class given a new instance's features is used to predict its class label. The naive Bayes classifier is a popular algorithm that computes these class posteriors under the simplifying assumption that the features are conditionally independent given the class.
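A minimal naive Bayes sketch in Python. The two classes, the three words, and all probabilities are hypothetical values rather than estimates from real data; the point is the computation of class posteriors as prior times the product of per-word likelihoods, normalized in log space to avoid numerical underflow on long inputs.

```python
import math

# Hypothetical model parameters, not estimated from any real corpus.
class_prior = {"spam": 0.4, "ham": 0.6}
word_given_class = {
    "spam": {"offer": 0.30, "meeting": 0.05, "free": 0.25},
    "ham": {"offer": 0.05, "meeting": 0.30, "free": 0.05},
}


def naive_bayes_posterior(words):
    """P(class | words), assuming words are conditionally independent given the class."""
    log_scores = {
        c: math.log(class_prior[c]) + sum(math.log(word_given_class[c][w]) for w in words)
        for c in class_prior
    }
    # Normalize with the log-sum-exp trick for numerical stability.
    m = max(log_scores.values())
    total = sum(math.exp(s - m) for s in log_scores.values())
    return {c: math.exp(s - m) / total for c, s in log_scores.items()}


post = naive_bayes_posterior(["free", "offer"])
print(max(post, key=post.get))  # spam
```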

📝 Epistemological Perspective: The Role of Posterior Probability

From an epistemological perspective, the posterior probability contains everything there is to know about an uncertain proposition, given prior knowledge and a mathematical model describing the observations available at a particular time. It expresses the degree of belief that results from combining the prior with the evidence provided by the data, and it can be updated again as further data arrive. For example, in decision theory, the posterior probability is used to make decisions under uncertainty: each possible action is scored by its expected utility, the average of its utility over the possible states of the world weighted by their posterior probabilities, and the action with the highest expected utility is chosen.
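A small sketch of choosing an action by posterior expected utility. The posterior probabilities of the two states and the utility table are hypothetical numbers for illustration.

```python
# Hypothetical posterior over states of the world, and a hypothetical utility table.
posterior = {"rain": 0.3, "dry": 0.7}
utility = {
    "take umbrella": {"rain": 0.0, "dry": -1.0},
    "leave umbrella": {"rain": -10.0, "dry": 0.0},
}


def expected_utility(action):
    """Average utility of an action, weighting each state by its posterior probability."""
    return sum(posterior[state] * utility[action][state] for state in posterior)


best_action = max(utility, key=expected_utility)
print(best_action)  # take umbrella
```

Taking the umbrella has expected utility -0.7 versus -3.0 for leaving it, so it is chosen even though rain is less likely than not: the decision depends on the utilities as well as on the posterior.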

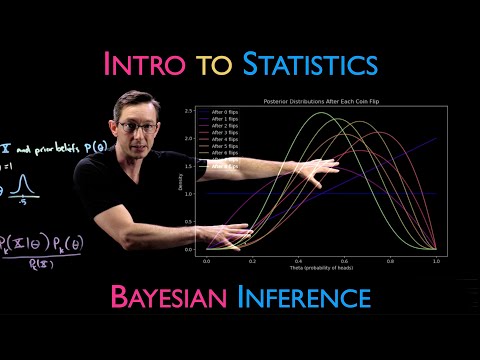

📊 Bayesian Updating: A Continuous Process

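Bayesian updating is a sequential process that can be repeated whenever new information arrives. The current posterior probability may serve as the prior in another round of Bayesian updating, and this process can be repeated indefinitely; when the observations are conditionally independent given the parameters, updating one observation at a time yields the same posterior as updating on all of the data at once. For instance, in time series analysis, the posterior distribution is used to form a posterior predictive distribution for forecasting future values. The Kalman filter is a popular algorithm that applies Bayesian updating recursively to estimate the state of a linear dynamical system with Gaussian noise.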
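A sketch of sequential updating under an assumed Beta-Bernoulli model: each 0/1 observation updates a Beta posterior, which then serves as the prior for the next observation. The observations are hypothetical. Because they are conditionally independent given the success probability, the one-at-a-time result matches a single batch update.

```python
observations = [1, 0, 1, 1, 0, 1, 1, 1]  # hypothetical 0/1 outcomes

a, b = 1, 1  # Beta(1, 1) prior on the success probability
for y in observations:
    # The posterior after this observation becomes the prior for the next one.
    a, b = a + y, b + (1 - y)

# Batch update: apply all the data to the original prior in one step.
n_success = sum(observations)
batch = (1 + n_success, 1 + len(observations) - n_success)
print((a, b), batch)  # (7, 3) (7, 3)
```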

📈 Applications of Posterior Distribution

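The posterior distribution has a wide range of applications in statistics and machine learning. It is used in parameter estimation, hypothesis testing, and prediction. For example, in image classification, a model's estimated posterior class probabilities, P(class | image), are used to assign images to categories. A convolutional neural network with a softmax output layer is often interpreted as estimating these class probabilities, although a standard network trained by maximum likelihood does not maintain a posterior distribution over its own weights; Bayesian neural networks are the variant that does.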
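A sketch of estimation, hypothesis testing, and prediction computed from a single posterior by grid approximation. It assumes a flat prior on a success probability theta and hypothetical data of 7 successes in 10 trials; the exact posterior in this case is Beta(8, 4), so the grid results can be checked against its mean of 2/3.

```python
# Grid approximation: evaluate prior * likelihood on a grid of theta values, then normalize.
n_grid = 1001
theta = [i / (n_grid - 1) for i in range(n_grid)]  # 0.000, 0.001, ..., 1.000
successes, trials = 7, 10  # hypothetical data

prior = [1.0] * n_grid  # flat prior on [0, 1]
likelihood = [t**successes * (1 - t) ** (trials - successes) for t in theta]
unnorm = [p * l for p, l in zip(prior, likelihood)]
total = sum(unnorm)
post = [u / total for u in unnorm]  # discrete posterior weights summing to 1

post_mean = sum(t * w for t, w in zip(theta, post))             # parameter estimate
p_above_half = sum(w for t, w in zip(theta, post) if t > 0.5)   # P(theta > 0.5 | data)
p_next_success = post_mean  # posterior predictive P(next trial succeeds | data)

print(round(post_mean, 3), round(p_above_half, 2))  # 0.667 0.89
```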

📊 Challenges and Limitations

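Despite its many advantages, the posterior distribution also has some challenges and limitations. One of the main challenges is computational: the evidence term in Bayes' rule is usually an integral over all parameter values that has no closed form, so the posterior often cannot be computed exactly. For instance, with high-dimensional parameter spaces, grid-based numerical integration becomes infeasible because the number of grid points grows exponentially with the number of dimensions, a manifestation of the curse of dimensionality. Monte Carlo methods, in particular Markov chain Monte Carlo, are a popular approach that approximates the posterior by drawing samples from it, using only the unnormalized product of prior and likelihood.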
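A minimal random-walk Metropolis sampler, one of the simplest MCMC algorithms, sketched in Python for a one-parameter problem (flat prior, hypothetical 7 successes in 10 trials). It evaluates only the unnormalized log posterior, so the evidence integral is never computed. The step size, chain length, burn-in, and seed are illustrative assumptions, not tuned values.

```python
import math
import random


def log_unnormalized_posterior(theta, successes=7, trials=10):
    """log(prior * likelihood) up to a constant: flat prior on (0, 1), binomial likelihood."""
    if not 0.0 < theta < 1.0:
        return -math.inf  # zero prior density outside (0, 1)
    return successes * math.log(theta) + (trials - successes) * math.log(1.0 - theta)


def metropolis(n_samples, step=0.1, start=0.5, seed=0):
    """Random-walk Metropolis: needs the posterior only up to its normalizing constant."""
    rng = random.Random(seed)
    current, current_lp = start, log_unnormalized_posterior(start)
    samples = []
    for _ in range(n_samples):
        proposal = current + rng.gauss(0.0, step)  # symmetric Gaussian proposal
        proposal_lp = log_unnormalized_posterior(proposal)
        # Accept with probability min(1, p(proposal) / p(current)).
        if rng.random() < math.exp(min(0.0, proposal_lp - current_lp)):
            current, current_lp = proposal, proposal_lp
        samples.append(current)
    return samples


draws = metropolis(20_000)[2_000:]  # discard the first 2,000 draws as burn-in
print(round(sum(draws) / len(draws), 2))
```

The sample mean should land close to the exact posterior mean of 2/3. The same idea extends to many parameters, although practical samplers need tuning and convergence diagnostics.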

📝 Future Directions

Future research directions for the posterior distribution include developing new algorithms for computing or approximating the posterior, and applying the posterior distribution to new areas such as natural language processing. For example, in topic modeling, the posterior distribution is used to infer the topics in a corpus of text. Latent Dirichlet allocation (LDA) is a popular probabilistic topic model whose posterior over topics and per-document topic proportions is typically approximated with variational inference or Gibbs sampling.
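Full LDA inference is too large for a short example, but one of its building blocks, the conjugate update of a Dirichlet prior with multinomial counts, can be sketched in a few lines. The sketch assumes a single document whose words have already been assigned to three topics (hypothetical counts) and a hypothetical symmetric prior of 0.1; in real LDA these assignments are themselves latent and must be inferred.

```python
def dirichlet_posterior(alpha, counts):
    """Dirichlet(alpha) prior + multinomial counts -> Dirichlet(alpha + counts) posterior."""
    return [a + n for a, n in zip(alpha, counts)]


alpha = [0.1, 0.1, 0.1]   # hypothetical symmetric prior over 3 topics
topic_counts = [5, 0, 3]  # hypothetical: words in one document assigned to each topic

post_alpha = dirichlet_posterior(alpha, topic_counts)
total = sum(post_alpha)
post_mean = [a / total for a in post_alpha]  # posterior mean topic proportions
print([round(p, 3) for p in post_mean])  # [0.614, 0.012, 0.373]
```

The small prior keeps the unused topic's proportion near zero without forcing it to exactly zero.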

📊 Real-World Examples

The posterior distribution appears in many real-world applications, including image classification, sentiment analysis, and recommendation systems. For instance, in self-driving cars, the posterior distribution is used to track and predict the trajectories of other vehicles, often with Kalman filters or related Bayesian filters that update the estimated state of each vehicle as new sensor readings arrive.
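A minimal scalar Kalman filter, sketched in Python under simplifying assumptions that are not taken from any particular vehicle system: a one-dimensional position that drifts as a random walk, Gaussian measurement noise, and hypothetical noise variances and readings. Each step's Gaussian posterior becomes the prior for the next step.

```python
def kalman_1d(measurements, mean0=0.0, var0=1000.0, q=0.01, r=1.0):
    """Scalar Kalman filter for a random-walk state observed with Gaussian noise.

    State model:       x_t = x_{t-1} + w_t,  w_t ~ N(0, q)
    Measurement model: z_t = x_t + v_t,      v_t ~ N(0, r)
    Returns the posterior (mean, variance) after each measurement.
    """
    mean, var = mean0, var0  # vague initial prior
    history = []
    for z in measurements:
        # Predict: propagate the previous posterior through the state model.
        var = var + q
        # Update: combine the prediction (prior) with the measurement (likelihood).
        gain = var / (var + r)
        mean = mean + gain * (z - mean)
        var = (1.0 - gain) * var
        history.append((mean, var))
    return history


# Hypothetical noisy position readings of a slowly moving object.
readings = [1.1, 0.9, 1.2, 1.0, 0.8, 1.1]
for mean, var in kalman_1d(readings):
    print(f"estimate {mean:.3f}, variance {var:.3f}")
```

The posterior variance shrinks with each reading, reflecting growing confidence, while the small process variance q keeps it from collapsing to zero.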

📝 Conclusion

In conclusion, the posterior distribution is a fundamental concept in Bayesian inference, and it has a wide range of applications in statistics and machine learning. It combines prior beliefs with the evidence provided by the data, and it can be updated again as new data arrive. The posterior distribution is a powerful tool for making predictions, estimating parameters, and testing hypotheses, and it will continue to play an important role in the development of new algorithms and models.

Key Facts

- Year: 1763

- Origin: Thomas Bayes' 'An Essay towards solving a Problem in the Doctrine of Chances' (published posthumously in 1763)

- Category: Statistics and Machine Learning

- Type: Concept

Frequently Asked Questions

What is the posterior distribution?

The posterior distribution is a type of conditional probability that results from updating the prior probability with information summarized by the likelihood via an application of Bayes' rule. It represents our updated beliefs about a hypothesis after observing the data, combining prior knowledge with the evidence the data provide.

What is the difference between the prior probability and the posterior probability?

The prior probability represents our initial beliefs about a hypothesis before observing any data, while the posterior probability represents our updated beliefs about the hypothesis after observing the data. The posterior probability is proportional to the prior probability multiplied by the likelihood, normalized by the evidence (the overall probability of the data).

What are some applications of the posterior distribution?

The posterior distribution has a wide range of applications in statistics and machine learning, including parameter estimation, hypothesis testing, and prediction. It is used in image classification, sentiment analysis, and recommendation systems, among other areas.

What are some challenges and limitations of the posterior distribution?

One of the main challenges is computational: the posterior often cannot be computed exactly because its normalizing constant (the evidence) is an intractable integral, so approximations such as MCMC or variational inference are needed. Additionally, the posterior distribution can be sensitive to the choice of prior distribution and the model specification.

What is the relationship between the posterior distribution and Bayesian inference?

The posterior distribution is a fundamental concept in Bayesian inference, and it is used to update our beliefs about a hypothesis based on new data. Bayesian inference is a statistical framework that uses the posterior distribution to make predictions, estimate parameters, and test hypotheses.

How is the posterior distribution used in machine learning?

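The posterior distribution is used in machine learning to make predictions, estimate parameters, and quantify uncertainty. It is used in algorithms such as naive Bayes and in Bayesian variants of models such as logistic regression and neural networks, among others; the standard versions of these models are usually fit by maximum likelihood rather than by computing a full posterior.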

What is the difference between the posterior distribution and the likelihood?

The posterior distribution represents our updated beliefs about a hypothesis after observing the data, while the likelihood represents the probability of observing the data given the hypothesis, viewed as a function of the hypothesis. The posterior distribution is proportional to the prior probability multiplied by the likelihood, normalized by the evidence.